Hard, transparent plastics like plexiglass, polycarbonate and polystyrene resemble glasses, and technically that’s what they are – a state of matter that has a liquid-like lack of regular order at the molecular scale, but which still displays the rigidity and inability to flow that we expect from a solid. In the glassy state the polymer molecules are locked into position, unable to slide past one another. If we heat these materials up, they have a relatively sharp transition into a (rather sticky and viscous) liquid state; for both plexiglass and polystyrene this happens around 100 °C, as you can test for yourself by putting a plastic ruler or a (polystyrene) yoghourt pot or plastic cup into a hot oven. But things are different at the surface, as shown by a paper in this week’s Science (abstract, subscription needed for full paper; see also commentary by John Dutcher and Mark Ediger). The paper, by grad student Zahra Fakhraai and Jamie Forrest, from the University of Waterloo in Canada, demonstrates that nanoscale indentations in the surface of a glassy polymer smooth themselves out at a rate which shows that the molecules near the surface can move around much more easily than those in the bulk.

This is a question that I’ve been interested in for a long time – in 1994 I was a co-author (with Rachel Cory and Joe Keddie) of a paper that suggested that this was the case – Size dependent depression of the glass transition temperature in polymer films (Europhysics Letters, 27 p 59). It was actually a rather practical question that prompted me to think along these lines; at the time I was a relatively new lecturer at Cambridge University, and I had a certain amount of support from the chemical company ICI. One of their scientists, Peter Mills, was talking to me about problems they had making films of PET (whose tradenames are Melinex or Mylar) – this is a glassy polymer at room temperature, but sometimes the sheet would stick to itself when it was rolled up after manufacturing. This is very hard to understand if one assumes that the molecules in a glassy polymer aren’t free to move, since to get significant adhesion between polymers one generally needs the string-like polymer chains to mix themselves up enough at the surface to get tangled up. Could it be that the chains at the surface had more freedom to move?

We didn’t know how to measure chain mobility directly near a surface, but I did think we could measure the glass transition temperature of a very thin film of polymer. When you heat up a polymer glass, it expands, and at the transition point where it turns into a liquid, there’s a jump in the value of the expansion coefficient. So if you heated up a very thin film and measured its thickness, you’d see the transition as a change in slope of the plot of thickness against temperature. We had available to us a very sensitive thickness-measuring technique called ellipsometry, so I thought it was worth a try to do the measurement – if the chains were more free to move at the surface than in the bulk, then we’d expect the transition temperature to decrease as we looked at very thin films, where the surface had a disproportionate effect.
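To make the idea of reading the transition off a change in slope concrete, here is a minimal sketch in Python. It is not the analysis used in the original experiments. It assumes the thickness-temperature curve can be modelled as two straight lines joined at the transition, and it runs on made-up data: the function names, the 50 nm film, the expansion coefficients and the noise level are all illustrative assumptions.

```python
import numpy as np


def estimate_tg(temps, thickness, margin=3):
    """Locate the kink in a thickness-vs-temperature curve.

    Fits h(T) = a + b*T + c*max(T - T_break, 0) for each candidate
    breakpoint and keeps the one with the smallest squared error.
    Assumes temps is sorted in increasing order.
    """
    temps = np.asarray(temps, dtype=float)
    thickness = np.asarray(thickness, dtype=float)
    best = None
    # Skip a few points at each end so both branches have data to fit.
    for t_break in temps[margin:-margin]:
        design = np.column_stack([
            np.ones_like(temps),               # intercept
            temps,                             # slope below the kink
            np.maximum(temps - t_break, 0.0),  # extra slope above the kink
        ])
        coeffs, *_ = np.linalg.lstsq(design, thickness, rcond=None)
        sse = float(np.sum((design @ coeffs - thickness) ** 2))
        if best is None or sse < best[0]:
            best = (sse, t_break, coeffs[1], coeffs[1] + coeffs[2])
    _, tg, glass_slope, melt_slope = best
    return tg, glass_slope, melt_slope


def synthetic_run(tg=100.0, h0=50.0, alpha_glass=2e-4, alpha_melt=6e-4,
                  noise=0.01, seed=0):
    """Made-up data: thickness in nm, temperature in degrees C.

    All parameter values are illustrative assumptions, not measurements.
    """
    rng = np.random.default_rng(seed)
    temps = np.arange(20.0, 161.0, 2.0)
    expansion = (alpha_glass * (temps - 20.0)
                 + (alpha_melt - alpha_glass) * np.maximum(temps - tg, 0.0))
    return temps, h0 * (1.0 + expansion) + rng.normal(0.0, noise, temps.size)


temps, thickness = synthetic_run()
tg_est, glass_slope, melt_slope = estimate_tg(temps, thickness)
print(f"estimated transition near {tg_est:.0f} C")
print(f"slope below: {glass_slope:.4f} nm/K, above: {melt_slope:.4f} nm/K")
```

With these assumed numbers the whole film expands by less than a nanometre between room temperature and the transition, which gives a feel for why the thickness has to be resolved to a fraction of an ångström before the kink shows up.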

I proposed the idea as a final year project for the physics undergraduates, and a student called Rachel Cory chose it. Rachel was a very able experimentalist, and when she’d got the hang of the equipment she was able to make the successive thickness measurements with a resolution of a fraction of an Ångstrom, as would be needed to see the effect. But early in the new year of 1993 she came to see me to say that the leukemia from which she had been in remission had returned, that no further treatment was possible, but that she was determined to carry on with her studies. She continued to come into the lab to do experiments, obviously getting much sicker and weaker every day, but nonetheless it was a terrible shock when her mother came into the lab on the last day of term to say that Rachel’s fight was over, but that she’d been anxious for me to see the results of her experiments.

Looking through the lab book Rachel’s mother brought in, it was clear that Rachel had succeeded in making five or six good experimental runs, with films substantially thinner than 100 nm showing clear transitions, and that for the very thinnest films the transition temperatures did indeed seem to be significantly reduced. Joe Keddie, a very gifted young American scientist then working with me as a postdoc (he’s now a Reader at the University of Surrey), had been helping Rachel with the measurements, and he followed up these early results with a large-scale set of experiments that showed the effect, to my mind, beyond doubt.

Despite our view that the results were unequivocal, they attracted quite a lot of controversy. A US group made measurements that seemed to contradict ours, and in the absence of any theoretical explanation of them there were many doubters. But by the year 2000, many other groups had repeated our work, and the weight of evidence was overwhelming that the influence of free surfaces led to a decrease in the temperature at which the material changed from being a glass to being a liquid in films less than 10 nm or so in thickness.

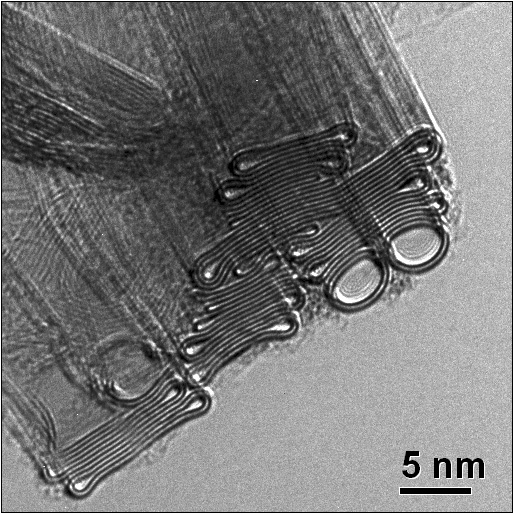

But this still wasn’t direct evidence that the chains near the surface were more free to move than they were in the bulk, and this direct evidence proved difficult to obtain. In the last few years a number of groups have produced stronger and stronger evidence that this is the case; Jamie and Zahra’s paper, I think, nails the final uncertainties, proving that polymer chains in the top few nanometers of a polymer glass really are free to move. One consequence is that we can’t necessarily predict the behaviour of polymer nanostructures on the basis of their bulk properties; this is going to become more relevant as people try to make smaller and smaller features in polymer resists, for example. What we still don’t have is a complete theoretical understanding of why this should be the case.