I’m off to the seaside. Normal service will resume in a couple of weeks.

Some personal views on nanotechnology, science and science policy from Richard Jones

A report on the BBC News website yesterday – Antique engines inspire nano chip – discussed a new computer design based on the use of nanoscale mechanical elements, which it described as being inspired by the Victorian grandeur of Babbage’s difference engine. The work referred to comes from the laboratory of Robert Blick of the University of Wisconsin, and is published in the New Journal of Physics as A nanomechanical computer—exploring new avenues of computing (free access).

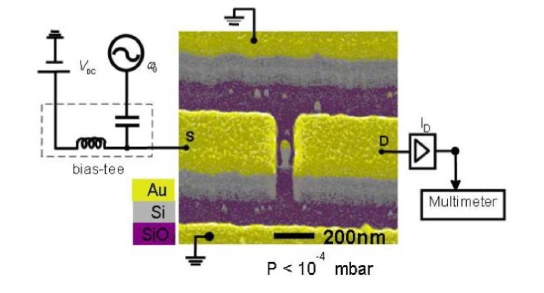

Talk of nanoscale mechanical computers and Babbage’s machine inevitably makes one think of Eric Drexler’s proposals for nanocomputers based on rod logic. However, the operating principles underlying Blick’s proposals are rather different. The basic element is a nanoelectromechanical single electron transistor (a NEMSET, see illustration below). This consists of a silicon nano-post, which oscillates between two electrodes, shuttling electrons between the source and the drain (see also Silicon nanopillars for mechanical single-electron transport (PDF)). The current is a strong function of the applied frequency, because when the post is in mechanical resonance it carries many more electrons across the gap, and the paper demonstrates how coupled NEMSETs can be used to implement logical operations.

Blick stresses that the speed of operation of these mechanical logic gates is not competitive with conventional electronics; the selling points are instead the ability to run at higher temperature (particularly if they were to be fabricated from diamond) and their lower power consumption.

Readers may be interested in Blick’s web-site nanomachines.com, which demonstrates a number of other interesting potential applications for nanostructures fabricated by top-down methods.

A nanoelectromechanical single electron transistor (NEMSET). From Blick et al., New J. Phys. 9 (2007) 241.

A surprisingly large fraction of the energy used in developed countries is used for heating and lighting buildings – in the European Union 40% of energy used is in buildings. This is an obvious place to look for savings if one is trying to reduce energy consumption without compromising economic activity. A few weeks ago, I reported a talk by Colin Humphreys explaining how much energy could be saved by replacing conventional lighting with light emitting diodes. A recent report commissioned by the UK Government’s Department for Environment, Food and Rural Affairs, Environmentally beneficial nanotechnology – Barriers and Opportunities (PDF file), ranks building insulation as one of the areas in which nanotechnology could make a substantial and immediate contribution to saving energy.

The problem doesn’t arise so much from new buildings; current building regulations in the UK and the EU are quite strict, and the technologies for making very heat efficient buildings are fairly well understood, even if they aren’t always used to the full. It is the existing building stock that is the problem. My own house illustrates this very well; its 3 foot thick solid limestone walls look as handsome and sturdy as when they were built 150 years ago, but the absence of a cavity makes them very poor insulators. To bring them up to modern insulating standards I’d need to dryline the walls with plasterboard with a foam-filled cavity, at a thickness that would lose a significant amount of the interior volume of the rooms. Is there some magic nanotechnology enabled solution that would allow us to retrofit proper insulation to the existing housing stock in an acceptable way?

The claims made by manufacturers of various products in this area are not always crystal clear, so it’s worth reminding ourselves of the basic physics. Heat is transferred by convection, conduction and radiation. Stopping convection is essentially a matter of controlling the drafts. The amount of heat transmitted by conduction is proportional to the difference of temperature and to a material constant called the thermal conductivity, and inversely proportional to the thickness of the material. For solids like brick, concrete and glass, thermal conductivities are around 0.6 – 0.8 W/m.K. As everyone knows, still air is a very good thermal insulator, with a thermal conductivity of 0.024 W/m.K, and the goal of traditional insulation materials, from sheep’s wool to plastic foam, is to trap air to exploit its insulating properties. Standard building insulation materials, like polyurethane foam, are actually pretty good. A typical commercial product has a thermal conductivity of 0.021 W/m.K; it manages to do a bit better than pure air because the holes in the foam are actually filled with a gas that is heavier than air.

The best known thermal insulators are the fascinating materials known as aerogels. These are incredibly diffuse foams – their densities can be as low as 2 mg/cm3, not much more than air – that resemble nothing as much as solidified smoke. One makes an aerogel by making a cross-linked gel (typically from water soluble polymers of silica) and then drying it above the critical point of the solvent, preserving the structure of the gel in which the strands are essentially single molecules. An aerogel can have a thermal conductivity around 0.008 W/m.K. This is substantially less than the conductivity of the air it traps, essentially because the nanoscale strands of material disrupt the transport of the gas molecules.

Aerogels have been known for a long time, mostly as a laboratory curiosity, with some applications in space where their outstanding properties have justified their very high expense. But it seems that there have been some significant process improvements that have brought the price down to a point where one could envisage using them in the building trade. One of the companies active in this area is the US-based Aspen Aerogels, which markets sheets of aerogel made, for strength, in a fabric matrix. These have a thermal conductivity in the range 0.012 – 0.015 W/m.K. This represents a worthwhile improvement on the standard PU foams. However, one shouldn’t overstate its impact; it means that to achieve a given level of thermal insulation one needs an insulating sheet a bit more than half the thickness of a standard material.
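To put a number on that last comparison, here is a minimal sketch. It assumes a simple flat slab, for which the thermal resistance is just thickness divided by thermal conductivity; the function name is my own invention, and the conductivities are the figures quoted above.

```python
def equivalent_thickness(reference_thickness, k_reference, k_new):
    """Thickness of a new material that matches the thermal resistance
    (thickness / conductivity) of a reference slab.
    Assumes one-dimensional, steady-state conduction through a flat sheet."""
    return reference_thickness * k_new / k_reference

K_PU_FOAM = 0.021                 # W/m.K, typical polyurethane foam (from the text)
K_AEROGEL_RANGE = (0.012, 0.015)  # W/m.K, aerogel blanket (from the text)

# Express the aerogel thickness as a fraction of the foam thickness.
ratios = [equivalent_thickness(1.0, K_PU_FOAM, k) for k in K_AEROGEL_RANGE]

for k, ratio in zip(K_AEROGEL_RANGE, ratios):
    print(f"k = {k} W/m.K: {ratio:.2f} x the foam thickness")
```

On these figures the aerogel sheet needs to be between about 0.57 and 0.71 times as thick as the foam it replaces.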

Another product, from a company called Industrial Nanotech Inc, looks more radical in its impact. This is essentially an insulating paint; the makers claim that three layers of this material, Nansulate, will provide significant insulation. If true, this would be very important, as it would easily and cheaply solve the problem of retrofitting insulation to the existing housing stock. So, is the claim plausible?

The company’s website gives little in the way of detail, either of the composition of the product or, in quantitative terms, its effectiveness as an insulator. The active ingredient is referred to as “hydro-NM-Oxide”, a term not well known in science. However, a recent patent filed by the inventor gives us some clues. US patent 7,144,522 discloses an insulating coating consisting of aerogel particles in a paint matrix. This has a thermal conductivity of 0.104 W/m.K. This is probably pretty good for a paint, but it is quite a lot worse than typical insulating foams. What, of course, makes matters much worse is that as a paint it will be applied as a very thin film (the recommended procedure is to use three coats, giving a dry thickness of 7.5 mils, a little less than 0.2 millimeters). Since one needs a thickness of at least 70 millimeters of polyurethane foam to achieve an acceptable value of thermal insulation (U value of 0.35 W/m2.K), it’s difficult to see how a layer that is both 350 times thinner than this, and with a significantly higher value of thermal conductivity, could make a significant contribution to the thermal insulation of a building.
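The size of the gap can be made concrete with a rough calculation. This is only a sketch under stated assumptions: conduction through a single flat layer, with thermal resistance equal to thickness divided by conductivity, ignoring surface resistances, the wall itself, and any radiative effect the coating might have. The function and variable names are mine; the numbers are the ones quoted above.

```python
MIL_IN_METRES = 25.4e-6  # one mil is a thousandth of an inch

def thermal_resistance(thickness_m, conductivity):
    """Conductive resistance of a flat layer in m2.K/W (thickness / conductivity)."""
    return thickness_m / conductivity

# Three coats of the paint: 7.5 mils dry thickness, 0.104 W/m.K (patent figure).
r_paint = thermal_resistance(7.5 * MIL_IN_METRES, 0.104)

# Polyurethane foam: 70 mm at 0.021 W/m.K.
r_foam = thermal_resistance(0.070, 0.021)

foam_to_paint = r_foam / r_paint

print(f"paint layer: {r_paint:.5f} m2.K/W")
print(f"foam layer:  {r_foam:.2f} m2.K/W")
print(f"the foam resists conduction about {foam_to_paint:.0f} times better")
```

On these assumptions the foam layer comes out well over a thousand times more resistant to conductive heat flow than the paint film, because the paint loses both on thickness and on conductivity.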

There’s a lot of interesting recent commentary about synthetic biology on Homunculus, the consistently interesting blog of the science writer Philip Ball. There’s lots more detail about the story of the first bacterial genome transplant that I referred to in my last post; his commentary on the story was published last week as a Nature News and Views article (subscription required).

Philip Ball was a participant in a recent symposium organised by the Kavli Foundation, “The merging of bio and nano: towards cyborg cells”. The participants in this produced an interesting statement: A vision for the convergence of synthetic biology and nanotechnology. The signatories to this statement include some very eminent figures both from synthetic biology and from bionanotechnology, including Cees Dekker, Angela Belcher, Steven Chu and John Glass. Although the statement is bullish on the potential of synthetic biology for addressing problems such as renewable energy and medicine, it is considerably more nuanced than the sorts of statements reported by the recent New York Times article.

The case for a linkage between synthetic biology and bionanotechnology is well made at the outset: “Since the nanoscale is also the natural scale on which living cells organize matter, we are now seeing a convergence in which molecular biology offers inspiration and components to nanotechnology, while nanotechnology has provided new tools and techniques for probing the fundamental processes of cell biology. Synthetic biology looks sure to profit from this trend.” The writers divide the enabling technologies for synthetic biology into hardware and software. For this perspective on synthetic biology, which concentrates on the idea of reprogramming existing cells with synthetic genomes, the crucial hardware is the capability for cheap, accurate DNA synthesis, about which they write: “The ability to sequence and manufacture DNA is growing exponentially, with costs dropping by a factor of two every two years. The construction of arbitrary genetic sequences comparable to the genome size of simple organisms is now possible.” This, of course, also has implications for the use of DNA as a building block for designed nanostructures and devices (see here for an example).

The authors are much more cautious on the software side. “Less clear are the design rules for this remarkable new technology—the software. We have decoded the letters in which life’s instructions are written, and we now understand many of the words – the genes. But we have come to realize that the language is highly complex and context-dependent: meaning comes not from linear strings of words but from networks of interconnections, with its own entwined grammar. For this reason, the ability to write new stories is currently beyond our ability – although we are starting to master simple couplets. Understanding the relative merits of rational design and evolutionary trial-and-error in this endeavor is a major challenge that will take years if not decades.”

It’s fairly clear that nanotechnology is no longer the new new thing. A recent story in Business Week – Nanotech Disappoints in Europe – is not atypical. It takes its lead from the recent difficulties of the UK nanotech company Oxonica, which it describes as emblematic of the nanotechnology sector as a whole: “a story of early promise, huge hype, and dashed hopes.” Meanwhile, in the slightly neophilic world of the think-tanks, one detects the onset of a certain boredom with the subject. For example, Jack Stilgoe writes on the Demos blog “We have had huge fun running around in the nanoworld for the last three years. But there is a sense that, as the term ‘nanotechnology’ becomes less and less useful for describing the diversity of science that is being done, interesting challenges lie elsewhere… But where?”

Where indeed? A strong candidate for the next new new thing is surely synthetic biology. (This will not, of course, be new to regular Soft Machines readers, who will have read about it here two years ago). An article in the New York Times at the weekend gives a good summary of some of the claims. The trigger for the recent prominence of synthetic biology in the news is probably the recent announcement from the Craig Venter Institute of the first bacterial genome transplant. This refers to an advance paper in Science (abstract, subscription required for full article) by John Glass and coworkers. There are some interesting observations on this in a commentary (subscription required) in Science. It’s clear that much remains to be clarified about this experiment: “But the advance remains somewhat mysterious. Glass says he doesn’t fully understand why the genome transplant succeeded, and it’s not clear how applicable their technique will be to other microbes.” The commentary from other scientists is interesting: “Microbial geneticist Antoine Danchin of the Pasteur Institute in Paris calls the experiment “an exceptional technical feat.” Yet, he laments, “many controls are missing.” And that has prevented Glass’s team, as well as independent scientists, from truly understanding how the introduced DNA takes over the host cell.”

The technical challenges of this new field haven’t prevented activists from drawing attention to its potential downsides. Those veterans of anti-nanotechnology campaigning, the ETC group, have issued a report on synthetic biology, Extreme Genetic Engineering, noting that “Today, scientists aren’t just mapping genomes and manipulating genes, they’re building life from scratch – and they’re doing it in the absence of societal debate and regulatory oversight”. Meanwhile, the Royal Society has issued a call for views on the subject.

Looking again at the NY Times article, one can perhaps detect some interesting parallels with the way the earlier nanotechnology debate unfolded. We see, for example, some fairly unrealistic expectations being raised: ““Grow a house” is on the to-do list of the M.I.T. Synthetic Biology Working Group, presumably meaning that an acorn might be reprogrammed to generate walls, oak floors and a roof instead of the usual trunk and branches. “Take over Mars. And then Venus. And then Earth” —the last items on this modest agenda.” And just as the radical predictions of nanotechnology were underpinned by what were in my view inappropriate analogies with mechanical engineering, much of the talk in synthetic biology is underpinned by explicit, but as yet unproven, parallels between cell biology and computer science: “Most people in synthetic biology are engineers who have invaded genetics. They have brought with them a vocabulary derived from circuit design and software development that they seek to impose on the softer substance of biology. They talk of modules — meaning networks of genes assembled to perform some standard function — and of “booting up” a cell with new DNA-based instructions, much the way someone gets a computer going.”

It will be interesting to see how the field of synthetic biology develops, and whether it does a better job of steering between overpromised benefits and overdramatised fears than nanotechnology arguably did. Meanwhile, nanotechnology won’t be going away. Even the sceptical Business Week article concluded that better times lay ahead as the focus in commercialising nanotechnology moved from simple applications of nanoparticles to more sophisticated applications of nanoscale devices: “Potentially even more important is the upcoming shift from nanotech materials to applications—especially in health care and pharmaceuticals. These are fields where Europe is historically strong and already has sophisticated business networks.”

Nature this week carries an editorial about the recent flurry of activity around public engagement over nanotechnology. This is generally upbeat and approving, reporting the positive side of the messages from the final report of the Nanotechnology Engagement Group, and highlighting some of the interesting outcomes of the Nanodialogues experiments. The Software Control of Matter blog even gets a mention as a “taste of true upstream thinking by nanoscientists”.

As usual, the editorial castigates the governments of the USA and the UK for not responding to the results of this public engagement, particularly in failing to get enough research going on potential environmental and health risks of nanoparticles. “These governments and others not only need to act on this outcome of public engagement, but must also integrate such processes into their departments’ and agencies’ activities.” To be fair, I think we are beginning to see the start of this, in the UK at least.

This month’s issue of Physics World has a useful article giving an overview of the possible applications of nanotechnology to solar cells, under the strapline “Nanotechnology could transform solar cells from niche products to devices that provide a significant fraction of the world’s energy”.

The article discusses both the high road to nano-solar, using the sophistication of semiconductor nanotechnology to make highly efficient (but expensive) solar cells, and the low road, which uses dye-sensitised nanoparticles or semiconducting polymers to make relatively inefficient, but potentially very cheap, materials. One thing the article doesn’t say much about is production and scaling, which are currently the main barriers in the way of these materials fully meeting their potential. We will undoubtedly hear much more about this over the coming months and years.