Earlier this month, Elizabeth Holmes, founder of the medical diagnostics company Theranos, was indicted on fraud and conspiracy charges. Just four years ago, Theranos was valued at $9 billion, and Holmes was being celebrated as one of Silicon Valley’s most significant innovators: not only the founder of one of the mythical Unicorns but, through the public value of her technology, a benefactor of humanity. How this astonishing story unfolded is the subject of a tremendous book by the journalist who first exposed the scandal, John Carreyrou. “Bad Blood” is a compelling read – but it’s also a cautionary tale, with some broader lessons about the shortcomings of Silicon Valley’s approach to innovation.

The story of Theranos

The story begins in 2003. Holmes had finished her first year as a chemical engineering student at Stanford. She was particularly influenced by one of her professors, Channing Robertson; she took his seminar on drug delivery devices, and worked in his lab in the summer. Inspired by this, she was determined to apply the principles of micro- and nanotechnology to medical diagnostics, and wrote a patent application for a patch which would sample a patient’s blood, analyse it, use the information to determine the appropriate response, and release a controlled amount of the right drug. This closed-loop system would combine diagnostics with therapy – hence Theranos (from “theranostic”).

Holmes dropped out of Stanford in her second year to pursue her idea, encouraged by Robertson. By the end of 2004, the company she had incorporated with one of Robertson’s PhD students, Shaunak Roy, had raised $6 million from angels and venture capitalists.

The nascent company soon decided that the original theranostic patch idea was too ambitious, and focused on diagnostics. Holmes concentrated on the idea of doing blood tests on very small volumes – the droplets of blood you get from a finger prick, rather than the larger volumes you get by drawing blood with a needle and syringe. It’s a great pitch for those scared of needles – but the true promise of the technology was much wider than this. Automatic units could be placed in patients’ homes, cutting out all the delay and inconvenience of having to go to the clinic for the blood draw, and then waiting for the results to come back. The units could be deployed in field situations – with the US Army in Iraq and Afghanistan – or in places suffering from epidemics, like Ebola or Zika. They could be used in drug trials to continuously monitor patient reactions and pick up side-effects quickly.

The potential seemed huge, and so were the revenue projections. By 2010, Holmes was ready to start rolling out the technology. She negotiated a major partnership with the pharmacy chain Walgreens, and the supermarket Safeway had loaned the company $30 million with a view to opening a chain of “wellness centres”, built around the Theranos technology, in its stores. The US Army – in the powerful figure of General James Mattis – was seriously interested.

In 2013, the Walgreens collaboration was ready to go live; the company had paid Theranos a $100 million “innovation fee” and a $40 million loan on the basis of a 2013 launch. The elite advertising agency Chiat\Day, famous for their work with Apple, were engaged to polish the image of the company – and of Elizabeth Holmes. Investors piled into a new funding round, at the end of which Theranos was valued at $9 billion – and Holmes was a paper billionaire.

What could go wrong? There turned out to be two flies in the ointment. First, Theranos’s technology couldn’t do even half of what Holmes had been promising. Second, even on the tests it could do, it was unacceptably inaccurate. Carreyrou’s book is at its most compelling as he gives his own account of how he broke the story, in the face of deception, threats, and some very expensive lawyers. None of this would have come out without some very brave whistleblowers.

At what point did the necessary optimism about a yet-to-be developed technology turn first into self-delusion, and then into fraud? To answer this, we need to look at the technological side of the story.

The technology

As is clear from Carreyrou’s account, Theranos had always taken secrecy about its technology to the point of paranoia – and it was this secrecy that enabled the deception to continue for so long. There was certainly no question that they would be publishing anything about their methods and results in the open literature. But, from the insiders’ accounts in the book, we can trace the evolution of Theranos’s technical approach.

To go back to the beginning, we can get a sense of what was in Holmes’s mind at the outset from her first patent, originally filed in 2003. This patent – “Medical device for analyte monitoring and drug delivery” – is hugely broad, at times reading like a digest of everything that anybody at the time was thinking about when it comes to nanotechnology and diagnostics. But one can see the central claim: an array of silicon microneedles would penetrate the skin to extract the blood painlessly; this would be pumped through 100 µm wide microfluidic channels, combined with reagent solutions, and then tested for a variety of analytes by detecting their binding to molecules attached to surfaces. In Holmes’s original patent, the idea was that this information would be processed, and then used to initiate the injection of a drug back into the body. One example quoted was the antibiotic vancomycin, which has rather a narrow window of effectiveness before side effects become severe – the idea would be that the blood was continuously monitored for vancomycin levels, which would then be automatically topped up when necessary.

Holmes and Roy, having decided that the complete closed loop theranostic device was too ambitious, began work to develop a microfluidic device to take a very small sample of blood from a finger prick, route it through a network of tiny pipes, and subject it to a battery of scaled-down biochemical tests. This all seems doable in principle, but fraught with practical difficulties. After three years making some progress, Holmes seems to have decided that this approach wasn’t going to work in time, so in 2007 the company switched direction away from microfluidics, and Shaunak Roy parted from it amicably.

The new approach was based around a commercial robot they’d acquired, designed for the automatic dispensing of adhesives. The idea of basing their diagnostic technology on this “gluebot” is less odd than it might seem. There’s nothing wrong with borrowing bits of technology from other areas, and reliably glueing things together depends on precise, automated fluid handling, just as diagnostic analysis does. But what this did mean was that Theranos no longer aspired to be a microfluidics/nanotech firm, but instead was in the business of automating conventional laboratory testing. This is a fine thing to do, of course, but it’s an area with much more competition from existing firms, like Siemens. No longer could Theranos honestly claim to be developing a wholly new, disruptive technology. What’s not clear is whether its financial backers, or its board, were told enough or had enough technical background to understand this.

The resulting prototype was called Edison 1.0 – and it sort-of worked. It could only do one class of tests – immunoassays – it couldn’t do many of these tests at the same time, and its results were not reproducible or accurate enough for clinical use. To fill in the gaps between what they promised their proprietary technology could do and its actual capabilities, Theranos resorted to modifying a commercial analysis machine – the Siemens Advia 1800 – to be able to analyse smaller samples. This was essential to fulfil Theranos’s claimed USP of being able to analyse the drops of blood from pin-pricks, rather than the larger volumes taken for standard blood tests from a syringe and needle into a vein.

But these modifications presented their own difficulties. What they amounted to was simply diluting the small blood sample to make it go further – but of course this reduces the concentration of the molecules the analyses are looking for – often below the range of sensitivity of the commercial instruments. And there remained a bigger question, that actually hangs over the viability of the whole enterprise – can one take blood from a pin-prick that isn’t contaminated to an unknown degree by tissue fluid, cell debris and the like? Whatever the cause, it became clear that the test results Theranos were providing – to real patients, by this stage – were erratic and unreliable.
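The dilution problem can be made concrete with a little arithmetic. What follows is only a minimal sketch: the sample volume, the volume the instrument needs, the analyte concentration and the detection limit are all hypothetical, illustrative numbers, not figures from Carreyrou’s book or from any real instrument’s specification.

```python
def diluted_concentration(concentration, sample_volume_ul, final_volume_ul):
    """Concentration after diluting a sample up to a larger final volume.

    Uses the standard dilution relation C1 * V1 = C2 * V2.
    """
    if sample_volume_ul <= 0 or final_volume_ul < sample_volume_ul:
        raise ValueError("final volume must be at least the (positive) sample volume")
    return concentration * sample_volume_ul / final_volume_ul


def is_measurable(concentration, detection_limit):
    """True if the concentration is at or above the instrument's lower limit."""
    return concentration >= detection_limit


# All values below are hypothetical, chosen only to illustrate the effect.
sample_ul = 10.0        # assumed finger-prick sample volume
needed_ul = 100.0       # assumed volume a conventional analyser requires
analyte = 5.0           # assumed analyte concentration, arbitrary units
detection_limit = 1.0   # assumed lower limit of the analyser, same units

diluted = diluted_concentration(analyte, sample_ul, needed_ul)

print(f"dilution factor: {needed_ul / sample_ul:.0f}x")
print(f"undiluted measurable: {is_measurable(analyte, detection_limit)}")
print(f"diluted measurable:   {is_measurable(diluted, detection_limit)}")
```

With these made-up numbers, a tenfold dilution takes an analyte that sits comfortably above the detection limit and pushes it below it, which is the essence of the difficulty described above.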

Theranos was working on a next generation analyser – the so-called miniLab – with the goal of miniaturising the existing lab testing methods to make a very versatile analyser. This project never came to fruition. Again, it was unquestionably an avenue worth pursuing. But Theranos wasn’t alone in this venture, and it’s difficult to see what special capabilities they brought that rivals with more experience and a longer track record in this area didn’t have already. Other portable analysers exist already (for example, the Piccolo Xpress), and the miniaturised technologies they would use were already in the market-place (for example, Theranos were studying the excellent miniaturised IR and UV spectrophotometers made by Ocean Optics – used in my own research group). In any case, events had overtaken Theranos before they could make progress with this new device.

Counting the cost and learning the lessons

What was the cost of this debacle? There was a human cost, not fully quantified, in terms of patients being given unreliable test results, which surely led to wrong diagnoses and missed or inappropriate treatments. And there is the opportunity cost – Theranos spent around $900 million, some of this on technology development, but rather too much on fees for lawyers and advertising agencies. But I suspect the biggest cost was the effect Theranos had in slowing down and squeezing out innovation in an area that genuinely did have the potential to make a big difference to healthcare.

It’s difficult to read this story without starting to think that something is very wrong with intellectual property law in the United States. The original Theranos patent was astonishingly broad, and given the amount of money they spent on lawyers, there can be no doubt that other potential innovators were dissuaded from entering this field. IP law distinguishes between the conception of a new invention and its necessary “reduction to practice”. Reduction to practice can be by the testing of a prototype, but it can also be by the description of the invention in enough detail that it can be reproduced by another worker “skilled in the art”. Interpretation of “reduction to practice” seems to have become far too loose. Rather than giving the right to an inventor to benefit from a time-limited monopoly on an invention they’ve already got to work, patent law currently seems to allow the well-lawyered to carve out entire areas of potential innovation for their exclusive investigation.

I’m also struck, in Carreyrou’s account, by the importance of personal contacts in the establishment of Theranos. We might think that Silicon Valley is the epitome of American meritocracy, but key steps in funding were enabled by who was friends with whom and by family relationships. It’s obvious that far too much was taken on trust, and far too little actual technical due diligence was carried out.

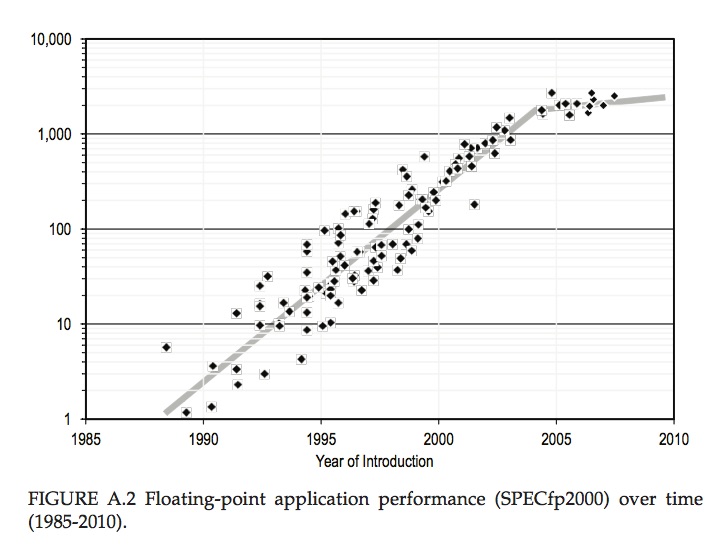

Carreyrou rightly stresses just how wrong it was to apply the Silicon Valley “fake it till you make it” philosophy to a medical technology company, where what follows from the fakery isn’t just irritation at buggy software, but life-and-death decisions about people’s health. I’d add to this a lesson I’ve written about before – doing innovation in the physical and biological realms is fundamentally more difficult, expensive and time-consuming than innovating in the digital world of pure information, and if you rely on experience in the digital world to form your expectations about innovation in the physical world, you’re likely to come unstuck.

Above all, Theranos was built on gullibility and credulousness – optimism about the inevitability of technological progress, faith in the eminence of the famous former statesmen who formed the Theranos board, and a cult of personality around Elizabeth Holmes – a cult that was carefully, deliberately and expensively fostered by Holmes herself. Magazine covers and TED talks don’t by themselves make a great innovator.

But in one important sense, Holmes was convincing. The availability of cheap, accessible, and reliable diagnostic tests would make a big difference to health outcomes across the world. The biggest tragedy is that her actions have set back that cause by many years.